- cross-posted to:

- technology@lemmy.ml

I think you’re a bullshitting con artist.

LLMs aren’t AI, let alone AGI.

They’re fucking prediction engines with extra functions.

Guys, I think I just found AGI in my gramp’s old stuff.

Geez. You can almost smell the desperation on this guy.

Sure you do. It’s not at all a transparent attempt to prolong the bubble.

I only have a rather high level understanding of current AI models, but I don’t see any way for the current generation of LLMs to actually be intelligent or conscious.

They’re entirely stateless, once-through models: any activity in the model that could be remotely considered “thought” is completely lost the moment the model outputs a token. Then it starts over fresh for the next token with nothing but the previous inputs and outputs (the context window) to work with.
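To make the "starts over fresh for the next token" point concrete, here is a minimal sketch of that generation loop. `toy_next_token` is a made-up stand-in for a real model, and the names are hypothetical; the only thing the sketch is meant to show is that nothing except the token list survives from one step to the next.

```python
from typing import Callable, List


def generate(
    next_token: Callable[[List[str]], str],
    prompt: List[str],
    max_new_tokens: int,
) -> List[str]:
    """Autoregressive loop: the only thing carried between steps is the token list."""
    context = list(prompt)
    for _ in range(max_new_tokens):
        # Each call sees only the tokens so far; no other state is passed in.
        token = next_token(context)
        context.append(token)
    return context


def toy_next_token(context: List[str]) -> str:
    """Hypothetical stand-in for a model: a pure function of the context."""
    return f"t{len(context)}"


print(generate(toy_next_token, ["hello"], 3))
# → ['hello', 't1', 't2', 't3']
```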

That’s why it’s so stupid to ask an LLM “what were you thinking”, because even it doesn’t know! All it’s going to do is look at what it spat out last and hallucinate a reasonable-sounding answer.

There’s no reason an LLM couldn’t be hooked up to a database, where it can save outputs and then retrieve them again to “think” further about them. In fact, any LLM that can answer questions about previous prompts/responses has to be able to do this. If you prompted an LLM to review all of its database entries, generate a new response based on that data, then save that output to the database and repeat at regular intervals, I could see calling that a kind of thinking. If you do the same process but with the whole model and all the DB entries, that’s in the region of what I’d call a strange loop. Is that AGI? I don’t think so, but I also don’t know how I would define AGI, or if I’d recognize it if someone built it.
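A rough sketch of that review-generate-save loop, assuming an in-memory SQLite table as the database. `toy_model` is a placeholder for an actual LLM call, and the table name and schema are invented for illustration.

```python
import sqlite3
from typing import Callable, List


def reflect_loop(
    model: Callable[[str], str],
    conn: sqlite3.Connection,
    rounds: int,
) -> List[str]:
    """Repeatedly feed every saved output back to the model and store the result."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS memory (id INTEGER PRIMARY KEY, text TEXT)"
    )
    for _ in range(rounds):
        # Review: read all earlier outputs back in, oldest first.
        rows = conn.execute("SELECT text FROM memory ORDER BY id").fetchall()
        prompt = "\n".join(row[0] for row in rows)
        # Generate a new response from that data, then save it.
        output = model(prompt)
        conn.execute("INSERT INTO memory (text) VALUES (?)", (output,))
        conn.commit()
    return [row[0] for row in conn.execute("SELECT text FROM memory ORDER BY id")]


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: counts how many entries it was shown."""
    seen = len(prompt.splitlines()) if prompt else 0
    return f"thought {seen + 1}"


conn = sqlite3.connect(":memory:")
print(reflect_loop(toy_model, conn, 3))
# → ['thought 1', 'thought 2', 'thought 3']
```

Note that the prompt grows every round, so a real version would hit the context limit eventually.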

If you prompted an LLM to review all of its database entries, generate a new response based on that data, then save that output to the database and repeat at regular intervals, I could see calling that a kind of thinking.

That’s kind of what the current agentic AI products like Claude Code do. The problem is context rot. When the context window fills up, the model loses the ability to distinguish between what information is important and what’s not, and it inevitably starts to hallucinate.

The current fixes are to prune irrelevant information from the context window, use sub-agents with their own context windows, or just occasionally start over from scratch. They’ve also developed conventional `AGENTS.md` and `CLAUDE.md` files where you can store long-term context and basically “advice” for the model, which is automatically read into the context window.

However, I think an AGI inherently would need to be able to store that state internally, to have memory circuits, and “consciousness” circuits that are connected in a loop so it can work on its own internally encoded context. And ideally it would be able to modify its own weights and connections to “learn” in real time.
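For the pruning fix mentioned above, here is a minimal sketch of one possible strategy: always keep a pinned block of long-term "advice" (the kind of thing that would live in an instructions file) and then keep as many of the most recent messages as fit in a token budget. This is not how any particular product does it; `count_tokens` is a crude whitespace count standing in for a real tokenizer, and all names are hypothetical.

```python
from typing import List


def count_tokens(text: str) -> int:
    """Crude assumption: one token per whitespace-separated word."""
    return len(text.split())


def prune_context(pinned: str, history: List[str], budget: int) -> List[str]:
    """Keep the pinned advice plus the newest messages that fit in the budget."""
    remaining = budget - count_tokens(pinned)
    kept: List[str] = []
    # Walk backwards from the newest message and stop at the first one
    # that no longer fits, so the kept history stays contiguous.
    for message in reversed(history):
        cost = count_tokens(message)
        if cost > remaining:
            break
        kept.append(message)
        remaining -= cost
    return [pinned] + list(reversed(kept))


history = ["old long message here now", "fix the bug", "done"]
print(prune_context("always run tests", history, budget=8))
# → ['always run tests', 'fix the bug', 'done']
```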

The problem is that would not scale to current usage because you’d need to store all that internal state, including potentially a unique copy of the model, for every user. And the companies wouldn’t want that because they’d be giving up control over the model’s outputs since they’d have no feasible way to supervise the learning process.

Yeah, I think for it to be a proper strange loop (if that is indeed a useful proxy for consciousness; I think there’s room for debate on that) it would need to be able to take its entire “self”, i.e. the whole model, weights, and all memories, as input in order to iterate on itself. I agree that it probably wouldn’t work for the current commercial applications of LLMs, but the fact that it’s not what commercial LLMs do doesn’t mean it couldn’t be done for research purposes.

That’s what an LLM is, a database of words using vectors.

You’re still limited by the context window in your example. Giving it another source of information doesn’t do anything other than give it more context.

Right, I mean if you made the context window enormous, such that you can include the entire set of embeddings and a set of memories (or maybe an index of memories that can be “recalled” with keywords), you’ve got a self-observing loop that can learn and remember facts about itself. I’m not saying that’s AGI, but I find it somewhat unsettling that we don’t have an agreed-upon definition. If a for-profit corporation made an AI that could be considered a person with rights, I imagine they’d be reluctant to be convincing about it.
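The "index of memories that can be recalled with keywords" part could look something like this toy inverted index. It is only a sketch: real systems would more likely use embeddings or a proper search engine, and the `MemoryIndex` class is invented for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Set


class MemoryIndex:
    """Toy keyword index: maps each lowercased word to the memories containing it."""

    def __init__(self) -> None:
        self._memories: List[str] = []
        self._index: Dict[str, Set[int]] = defaultdict(set)

    def remember(self, text: str) -> None:
        memory_id = len(self._memories)
        self._memories.append(text)
        for word in text.lower().split():
            self._index[word].add(memory_id)

    def recall(self, keyword: str) -> List[str]:
        # .get avoids creating empty entries for unknown keywords.
        ids = sorted(self._index.get(keyword.lower(), set()))
        return [self._memories[i] for i in ids]


memory = MemoryIndex()
memory.remember("I answered a question about GPUs")
memory.remember("The user asked about GPUs again")
memory.remember("Unrelated note")
print(memory.recall("gpus"))
# → ['I answered a question about GPUs', 'The user asked about GPUs again']
```

Only the recalled entries would need to go into the context window, instead of every memory at once.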

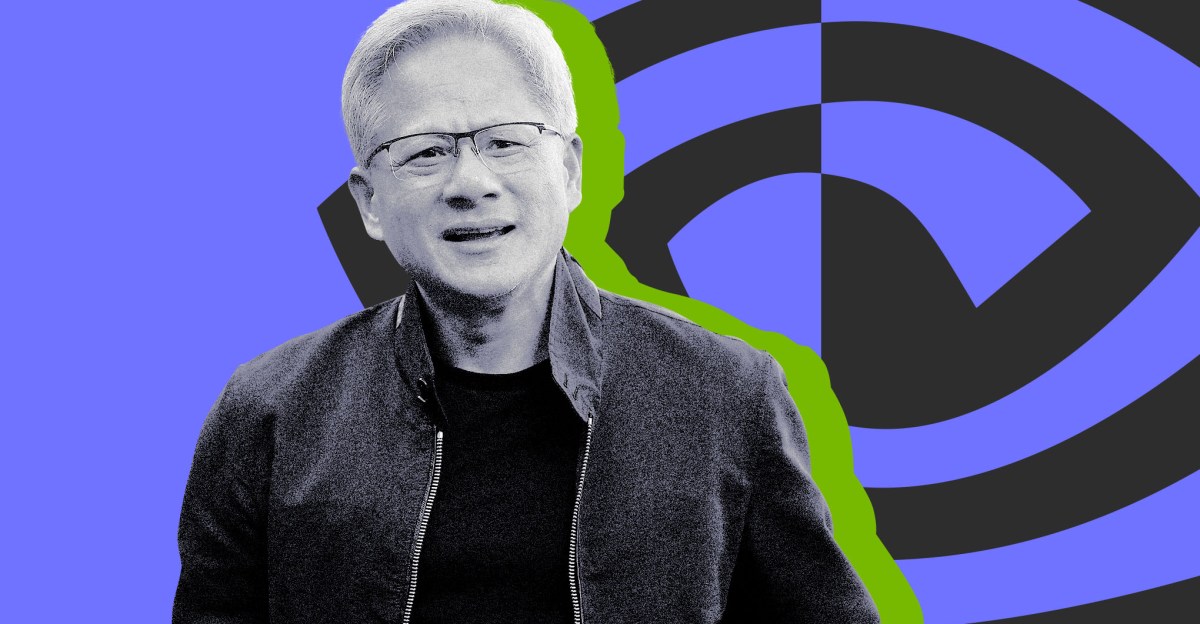

So why do we need Jensen Huang?

Exactly. CEO is maybe the easiest job for an AI to take over, so an AGI is possibly the most perfect candidate for that role.

Put up or shut up, tech bro CEOs. Replace yourself if it’s so fucking amazing.

Fridman, the podcast’s host, defines AGI as an AI system that’s able to “essentially do your job,” as in start, grow, and run a successful tech company worth more than $1 billion. He then asks Huang when he believes AGI will be real — asking if it’s, say, five, 10, 15, or 20 years away — and Huang responds, “I think it’s now. I think we’ve achieved AGI.”

So we’ve achieved AGI in the sense that it could replace a nonsensical fart-sniffing clown, hyping a horde of morons into valuating a company at orders of magnitude its actual worth?

fart sniffer