…Previously, a creative design engineer would develop a 3D model of a new car concept. This model would be sent to aerodynamics specialists, who would run physics simulations to determine the coefficient of drag of the proposed car—an important metric for energy efficiency of the vehicle. This simulation phase would take about two weeks, and the aerodynamics engineer would then report the drag coefficient back to the creative designer, possibly with suggested modifications.

Now, GM has trained an in-house large physics model on those simulation results. The AI takes in a 3D car model and outputs a coefficient of drag in a matter of minutes. “We have experts in the aerodynamics and the creative studio now who can sit together and iterate instantly to make decisions [about] our future products,” says Rene Strauss, director of virtual integration engineering at GM…

“What we’re seeing is that actually, these tools are empowering the engineers to be much more efficient,” Tschammer says. “Before, these engineers would spend a lot of time on low added value tasks, whereas now these manual tasks from the past can be automated using these AI models, and the engineers can focus on taking the design decisions at the end of the day. We still need engineers more than ever.”

This doesn’t sound like they are using LLMs for processing their 3D models. The way it’s described in the article sounds a lot more like some machine learning model trained on physics simulations for aerodynamics.

Yes! The way those physics models are created is so cool. The article somewhat explains it, but it’s mostly a fluff-piece for things unrelated to genAI. More in-depth:

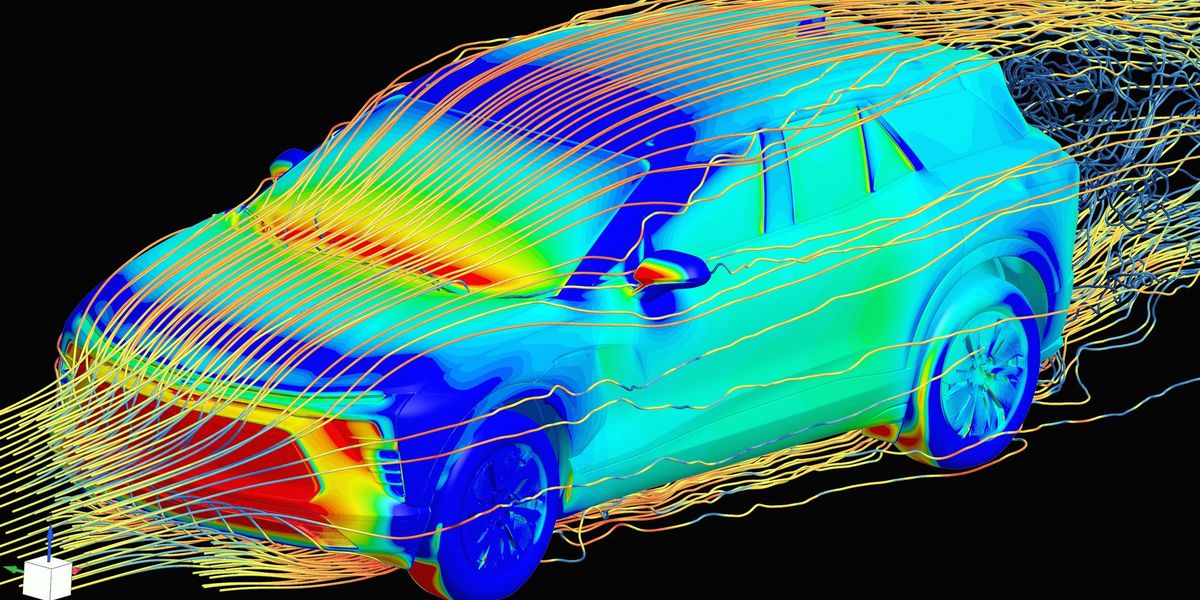

The physically accurate simulation is great but slow. So we can create a neural network (there’s a huge variety of shapes, i.e. architectures), give it an example physical state, and tell it to guess what that state will look like in, say, 1ms. We compare the guess against what the accurate simulation says and make it improve, billions of times, until it becomes “good enough” in most cases. By doing those 1ms steps in a loop, we get a full simulation. Because we get to choose the network’s shape, we can pick one that’s quite fast to compute, and now we have a less accurate but faster simulation.
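To make the "learn one small step, then loop it" idea concrete, here is a rough toy sketch in Python. Everything in it is an assumption for illustration: the "expensive" simulator is just a damped spring integrated with many tiny substeps, and the learned model is a plain least-squares linear fit rather than a neural network (a spring's step happens to be linear, so that is enough here). The names `slow_step`, `fast_step` and `rollout` are made up. It is nowhere near what GM would actually run.

```python
import numpy as np

def slow_step(state, dt=1e-3, substeps=100, k=4.0, c=0.1):
    """Toy 'expensive' simulator: advance a damped spring by dt (1 ms)
    using many tiny semi-implicit Euler substeps."""
    x, v = state
    h = dt / substeps
    for _ in range(substeps):
        v = v + h * (-k * x - c * v)  # spring force plus damping
        x = x + h * v
    return np.array([x, v])

# Training data: random states and where the slow simulator sends them.
rng = np.random.default_rng(0)
states = rng.uniform(-1.0, 1.0, size=(200, 2))
targets = np.array([slow_step(s) for s in states])

# "Training": fit a 2x2 matrix W so that state @ W approximates the next state.
# A real surrogate would be a neural network trained by gradient descent.
W, *_ = np.linalg.lstsq(states, targets, rcond=None)

def fast_step(state):
    """Learned one-step model: a single small matrix multiply."""
    return state @ W

def rollout(step, state, n_steps):
    """Apply a one-step model in a loop to get a full trajectory."""
    trajectory = [state]
    for _ in range(n_steps):
        state = step(state)
        trajectory.append(state)
    return np.array(trajectory)

start = np.array([1.0, 0.0])
ref = rollout(slow_step, start, 1000)     # 1 simulated second, slow way
approx = rollout(fast_step, start, 1000)  # same second, learned way
max_error = np.abs(ref - approx).max()
print("largest deviation from the reference:", max_error)
```

In this toy case the learned step matches the reference almost exactly, because the underlying step really is linear. With real fluid dynamics the step is nowhere near that simple, which is why you need a proper network, lots of training data, and some tolerance for "good enough".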

The really cool thing is that sometimes these models end up more accurate than the more expensive physics simulation, probably because real physics follows consistent rules, and consistent rules are easier to learn.

We’ve done things like this for ages. One way to improve these models is to give them multiple time steps as input instead of just one. Unfortunately, older network shapes kinda suck at seeing connections over time, so this is expensive. Luckily, transformers were invented! This is a neural network shape that is really good at seeing connections along one dimension, like time, while still being pretty cheap and really easy to run in parallel (which is how you go fast nowadays).
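For the curious, the core trick inside a transformer is "self-attention", and a bare-bones version fits in a few lines. This is a minimal NumPy sketch of plain scaled dot-product attention over a short sequence of time steps, with random (untrained) weights and made-up sizes. It leaves out everything else a real transformer has (multiple heads, masking, positional information, training), so treat it as an illustration only.

```python
import numpy as np

def self_attention(x, Wq, Wk, Wv):
    """Scaled dot-product self-attention over a sequence.

    x has shape (time_steps, features). Every time step gets to look at
    every other time step in one batch of matrix multiplies, which is why
    this parallelizes so well.
    """
    q, k, v = x @ Wq, x @ Wk, x @ Wv
    scores = q @ k.T / np.sqrt(k.shape[-1])               # how relevant is step j to step i
    scores = scores - scores.max(axis=-1, keepdims=True)  # for numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)  # softmax over time steps
    return weights @ v, weights

rng = np.random.default_rng(0)
time_steps, features = 8, 4                  # hypothetical sizes
x = rng.normal(size=(time_steps, features))  # e.g. 8 consecutive physics states
Wq, Wk, Wv = (rng.normal(size=(features, features)) for _ in range(3))

out, weights = self_attention(x, Wq, Wk, Wv)
print(out.shape)      # one updated vector per time step
print(weights.shape)  # one attention weight per pair of time steps
```

Note that nothing in there is a loop over time: step 8 can "see" step 1 directly instead of having information passed along one step at a time.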

With a bunch of extra wiring, transformers also become GPT, i.e. text-based AIs. That’s why text AIs suddenly got way better: they went from seeing connections between words maybe 3-4 steps back to, recently, a literal million. That shared building block is basically the only relationship this has with “AI”.