I can’t report because I haven’t validated them yet… I’m not going to send [the Linux kernel maintainers] potential slop

That’s worth pointing out IMO

Though that quote is followed by this, which indicates at least five of those vulnerabilities were real:

I searched the Linux kernel and found a total of five Linux vulnerabilities so far that Nicholas either fixed directly or reported to the Linux kernel maintainers, some as recently as last week:

I wonder how true that is. The author of this blog post seems to just be taking this guy’s word for it. Did Anthropic actually confirm the bug exists by trying to trigger it on real systems, or are they assuming it’s real because it looks plausible? The report claims you can do it with two cooperating NFS clients, so did they actually do that, or are they just assuming it’ll work?

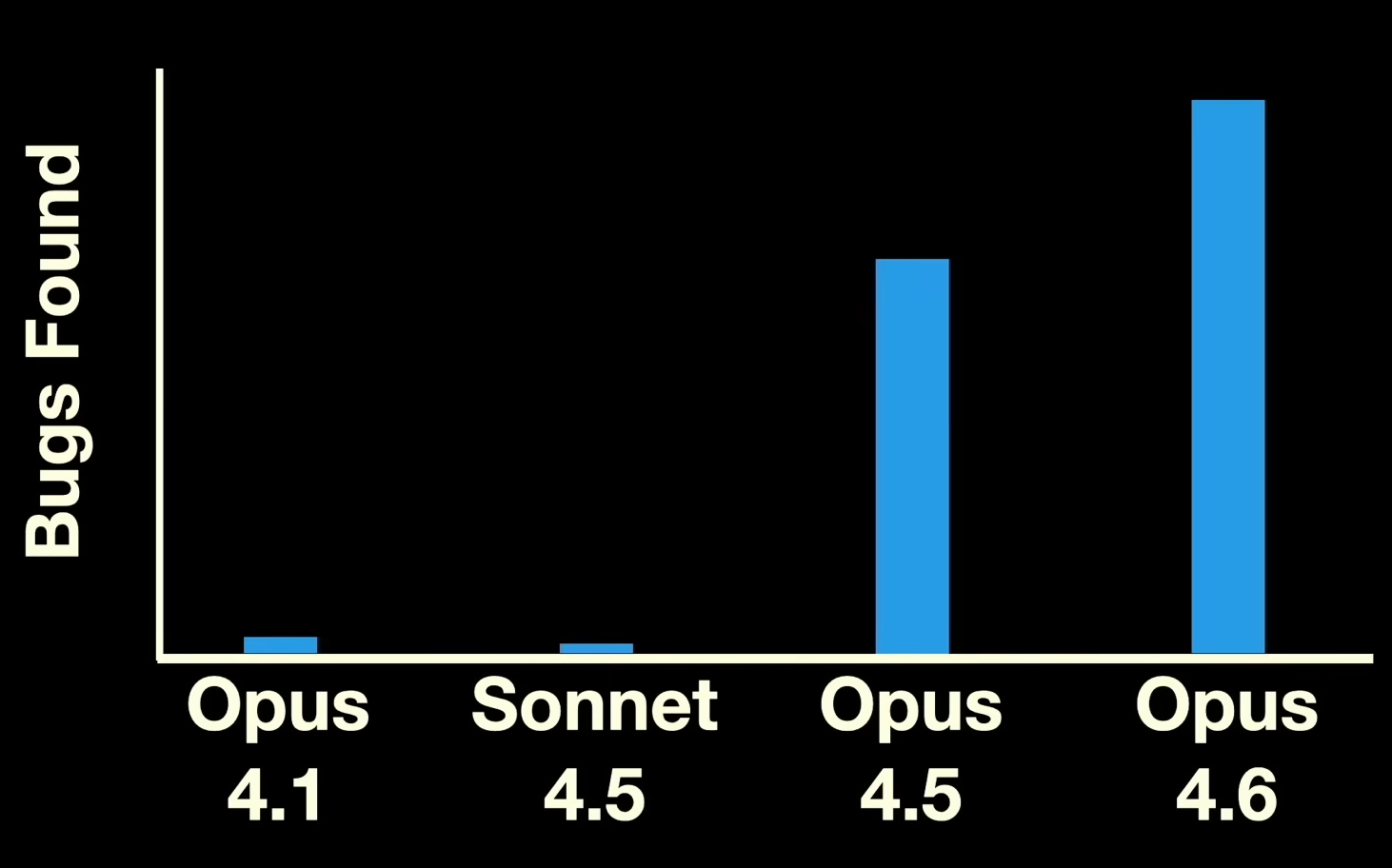

My favorite kind of graphs are ones where an entire axis is unlabeled:

You see this a lot with marketing graphs. They say nothing, but they’re designed to convince you that the graphs mean something.

Anyway, it’s neat they found and fixed, supposedly, some real bugs. I’m curious how many fake reports they had to sift through to find any real ones.

He writes that there are 100, and that he has not sifted through them yet.

But I am wondering the same. How often did he have to run the same command, and how long did it take to find the right prompt?

Real vulnerability or hallucinated?

Given that Nicholas Carlini works at Anthropic, I would wait for another person to confirm this.

The research method is just pointing an LLM at the code file by file and asking whether any vulnerability exists, which reminds me of the person who bugged ffmpeg devs with vulnerabilities on niche non enabled codec decryption.

reminds me of the person who bugged ffmpeg devs with vulnerabilities on niche non enabled codec decryption.

That was Google.

I’m really scared about what AI is going to do to the world, but I think it’s here to stay.

Hopefully it’s actually finding real bugs

If I ever received a vuln report from an AI, or other such glorified spreadsheet, I would promptly dismiss it, then wait for a human to organically discover it on their own and consider that proof of its actual existence.

If the bug was actually legitimate, and was verified, I don’t think it’s a good idea to just wait till someone actually experiences it.

Of course this depends on the severity of the bug as well. In the case of this article, he was refusing to submit anything until he had actually verified it, but he defo was using the AI as the origin of the discovery.

I would prefer those types of reports over blanket AI vulnerability reports that aren’t proven. Discrediting a valid bug because it was not human generated may lessen your workload, but it’s at the cost of your software’s security and reliability.

I agree I would throw out reports that are AI driven & not proven, but if someone did the actual PoC and demonstrated actual risk I wouldn’t care if it was originally AI or not. I would just assign it based off severity like normal.

Letting your users get hacked just to own the AIs is certainly a strategy.