Basically, I was super tired getting home from an event last night and didn’t even notice the water hadn’t stopped flowing normally. It’s quiet so I don’t normally hear it.

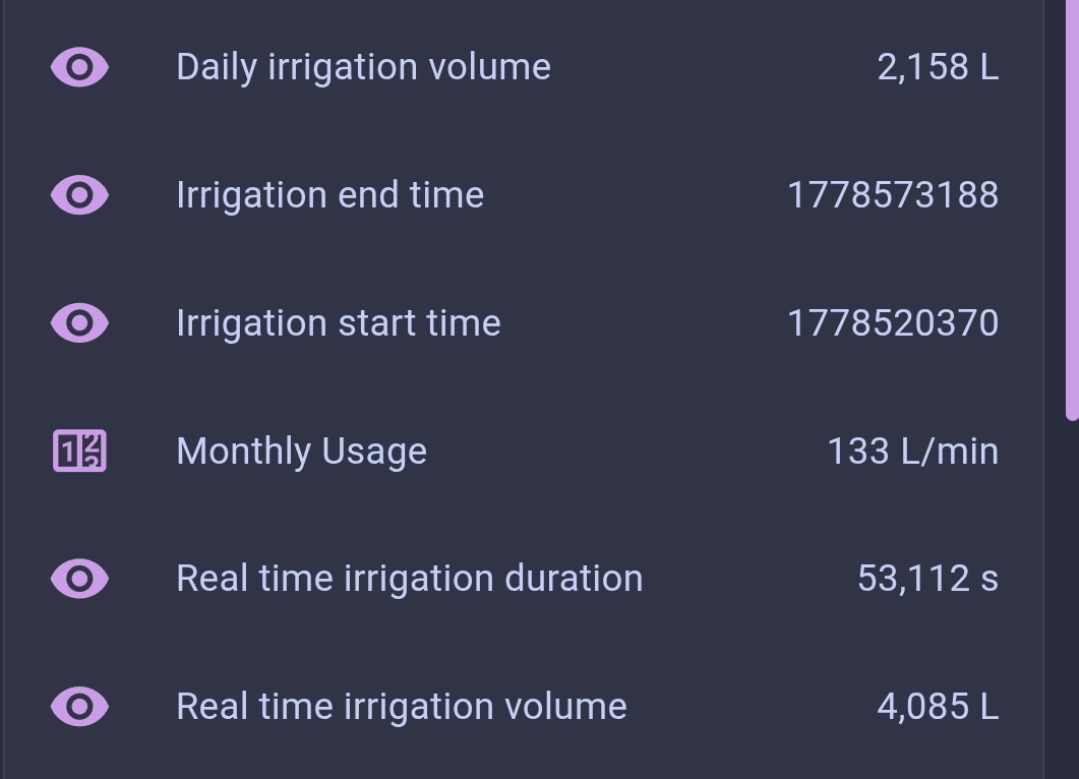

Apparently, at some point a couple hours in, a fitting in my drip setup blew, flooding my peppers planter entirely and in the process burning through nearly 4000 liters of water before I caught it on my way out the door to work this morning.

I’ve since learned there’s a Z2M command to start watering on a timer, but I didn’t know that before and trusted Whisper to not fuck this up. My fault.

Don’t think my water bill company will be amenable to it. I’ll call and ask but, fuck.

Lots of good suggestions here. I’ll also throw in that a basic standalone automation can help prevent the worst:

`if valve not "closed" for > 45min set valve "closed"`

Edit: use `not closed` instead of `open` to prevent brief `unavailable` states from messing with it.

What are you, a data center?

Thank you, I needed the laugh for this x3

Hopefully my peppers aren’t dying from being drowned, nor my other plants. Guess I gotta feed them when I get home from work!

Hope everything survives and thrives for you! I have a neonatal Home Assistant setup that I am always leery about expanding beyond lights and the entertainment system.

Rule 1 of automation: check the code before you run the code.

Rule 2 of automation: watch the code run.

Rule 3 of automation: repeat step 2 until anxiety fades.

I do all three every time I do something new; it’s basically mandatory for me. Every time I tested it, over and over via voice itself, it got the numerics right. Timers always behave, and they depend on numerics as well.

This was just the first time, after the anxiety had faded, that it failed to transcribe in a useful fashion.

Makes me want to look into more-predictable STT systems.

Honestly, luck of the draw sometimes. It works 99 times, then goes “fwoom” the 100th.

To prevent things like this from happening, you can create alarms in Grafana to notify you of unusual behaviour. Something along the lines of “If water consumption in the last 6 hours is > 10L / hour, fire the alarm and notify me”.
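As a rough illustration of the rule in that quote, here is a minimal Python sketch of the threshold check itself. It is not Grafana configuration. It assumes the meter reports cumulative liters with timestamps, and the function names `average_flow_l_per_hour` and `should_alert` are made up for this example.

```python
from datetime import datetime, timedelta


def average_flow_l_per_hour(readings, now, window=timedelta(hours=6)):
    """Average consumption in L/h over the window.

    `readings` is a list of (timestamp, cumulative_liters) tuples sorted by
    time (an assumed data shape). Returns None when fewer than two readings
    fall inside the window, since no rate can be computed then.
    """
    recent = [(t, v) for t, v in readings if now - window <= t <= now]
    if len(recent) < 2:
        return None
    (t0, v0), (t1, v1) = recent[0], recent[-1]
    hours = (t1 - t0).total_seconds() / 3600
    if hours <= 0:
        return None
    return (v1 - v0) / hours


def should_alert(readings, now, threshold_l_per_hour=10.0):
    """True when the 6-hour average exceeds the threshold (10 L/h, as in the quote)."""
    rate = average_flow_l_per_hour(readings, now)
    return rate is not None and rate > threshold_l_per_hour
```

Averaging over the whole window keeps a short, legitimate watering run from tripping the alarm, at the cost of reacting more slowly to a sudden burst.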

I like to think of stuff like that as an error culture: errors are bound to happen, be it a wrong Whisper transcript, a broken pipe, etc., and that’s okay. You can never be fully sure you are error-free. What you can do is create a culture that catches these errors in their early stages, without having to wait, say, a whole month for an astronomically expensive water bill before you say “Hey, something’s wrong” (that wasn’t the case here, but bear with me).

Reporting like that really is something I should be doing more of. I think about it lots at work and when I go home I guess I often turn off that part.

Field validation. Rookie mistake from the dev.

I set it up as just a sentence automation, not as a separate script being fired where that’d have caused an error. I’ve not found a means of forcing validation like that in sentence automations, only in custom sentences/intents (never quite gotten a handle on those)

Template Conditions in that case. Ensure it falls between a specific range when ingested as an intent, and reject if not within that range. Should help you avoid future issues like this.
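The range check described there boils down to something like the sketch below. It is plain Python, not Home Assistant template syntax; the helper name `validate_minutes` and the 1 to 60 minute bounds are assumptions picked for illustration.

```python
def validate_minutes(raw, low=1, high=60):
    """Return the duration in minutes if `raw` is a whole number in [low, high].

    Returns None for anything else, so the caller can reject the request
    instead of opening the valve with a garbage value.
    """
    try:
        minutes = int(str(raw).strip())
    except ValueError:
        # Covers non-numeric transcripts such as "ten" or an empty string.
        return None
    return minutes if low <= minutes <= high else None
```

The important part is that a bad transcript yields "no value" rather than some value that still gets passed on to the valve.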

Yep. In the fix I linked I set it up to default to a word if it fails the template instead, so it’ll then check for a match to that word (“fail”) and if so it just falls back to 5 minutes and will tell me as much.
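A sketch of that fallback pattern in plain Python, not the actual fix from the link. The sentinel word "fail" and the 5-minute fallback come from the comment above; the function names, the 1 to 60 minute range, and the message wording are assumptions.

```python
FAIL = "fail"          # sentinel word used when the transcript does not validate
FALLBACK_MINUTES = 5   # safe default duration


def parse_or_fail(raw, low=1, high=60):
    """Return the digits as text if they form a number in [low, high], else FAIL."""
    text = str(raw).strip()
    if text.isdecimal() and low <= int(text) <= high:
        return text
    return FAIL


def resolve_duration(raw):
    """Return (minutes, spoken_message), falling back to the safe default on FAIL."""
    parsed = parse_or_fail(raw)
    if parsed == FAIL:
        message = (
            "Could not understand the duration, "
            f"watering for {FALLBACK_MINUTES} minutes instead."
        )
        return FALLBACK_MINUTES, message
    minutes = int(parsed)
    return minutes, f"Watering for {minutes} minutes."
```

Falling back to a short run and saying so out loud means a bad transcript costs a few minutes of water at most, and you find out right away.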

Going to be a hell of a lot better about that in the future though. Was definitely a rookie mistake on my part to assume that just because it’d worked every time I’d tested it, used it, and with timers and all thus far that it would always work 100% of the time. Why assume when you can validate? I do in code I write… Guess I don’t think about it in the same way with HA as much, oops.

👍

Ah, more AI-caused problems. Love to see it.

Speech to text, not AI. No one called it AI until the tech execs started purposefully conflating them all to make folks who say they’re “anti-AI” look bad against all other machine learning. And now even fucking Nuance calls DragonSpeak “AI” even though it’s always been machine learning-based since it first started.

It’s one of those stupid things that would just need a toggle to force transcription context. Probably works flawlessly with Nabu Casa’s STT, but even though I pay them for it I want it local. Hell, I’m pretty sure Dragon even has (or had) switches for forcing certain processing methods.

Machine Learning IS AI! You got yourself confused by marketing schemes.

https://en.wikipedia.org/wiki/Machine_learning

And yes it was called AI before. It was just not in everyone’s mouth.

Yes, I’m aware that ML is a field of computer math, i.e. artificial intelligence. But purposefully trotting that out is tantamount to doing unpaid work for Sam Altman at this point, since in common parlance nearly everyone uses “AI” as shorthand for modern generative AI systems as well as the hype itself.

Which thus…no, it wasn’t called AI before, it was generally referred to as machine learning or neural networks with that implying it was AI (math) before it became labeled as AI (hype).

This feels like really unnecessary pedantry.

AI is the perfectly fine umbrella term for it. It has been used forever in terms of ML. Just go back to the linked Wikipedia entry and you will find that it’s literally the first sentence.

Just because you have negative feelings about a scientific term does not make it OK to claim it does not belong to the same or a related group.
