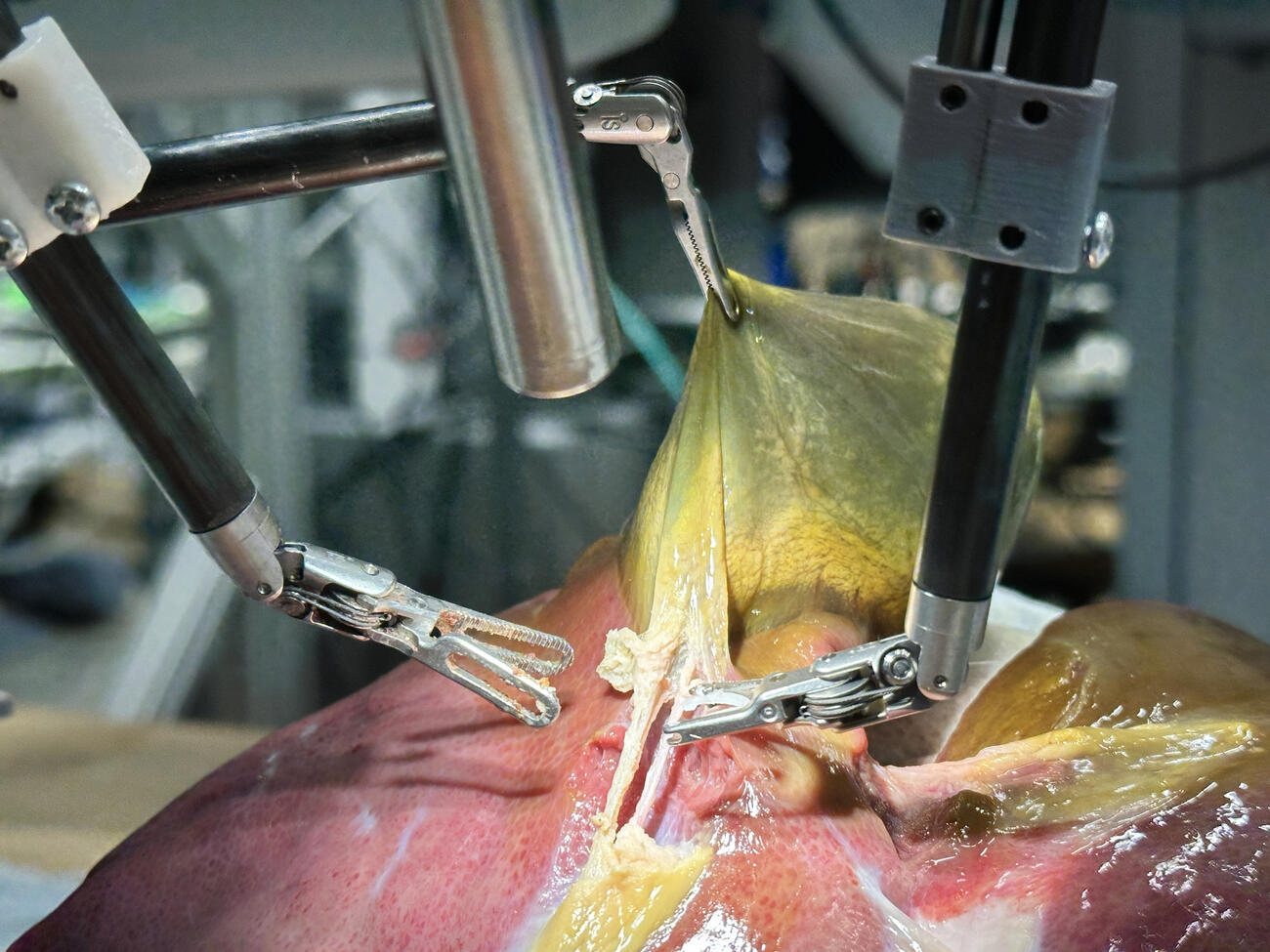

A robot trained on videos of surgeries performed a lengthy phase of a gallbladder removal without human help. The robot operated for the first time on a lifelike patient, and during the operation, responded to and learned from voice commands from the team—like a novice surgeon working with a mentor.

The robot performed unflappably across trials and with the expertise of a skilled human surgeon, even during unexpected scenarios typical of real-life medical emergencies.

See, the part that I don’t like is that this is a learning algorithm trained on videos of surgeries.

That’s such a fucking stupid idea. That’s literally so much worse than letting surgeons use robot arms to do surgeries as your primary source of data, and making fine-tuned adjustments based on visual data in addition to other electromagnetic readings.

Care to elaborate why?

From my point of view I don’t see a problem with that. Or let’s say: the potential risks highly depend on the specific setup.

Being trained on videos means it has no ability to adapt, improvise, or use knowledge during the surgery.

Edit: However, in the context of this particular robot, it does seem that additional input was given and other training was added in order for it to expand beyond what it was taught through the videos. As the study noted, the surgeries were performed with 100% accuracy. So in this case, I personally don’t have any problems.

Yeah, but the training set of videos is probably far larger than any set of robot-arm recordings, and the thing about AI models is that if the training set is too small, they don’t really work at all. Once you get above a certain data set size, they start to become competent.

After all, I assume the people doing this research have already considered that. I doubt they’re reading your comment right now, slapping their foreheads, and going, “Damn, this random guy on the internet is right, he’s so much more intelligent than us scientists.”