- cross-posted to:

- privacy@lemmy.ml

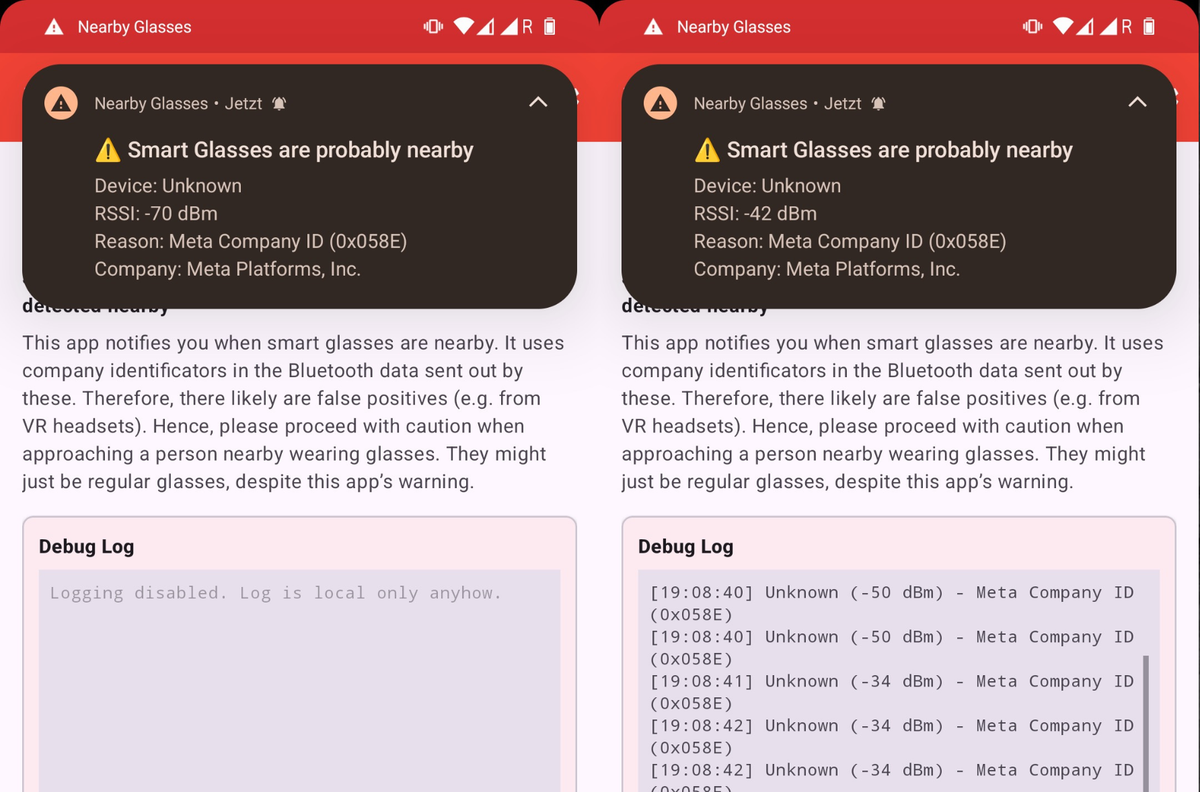

The creator of Nearby Glasses made the app after reading 404 Media’s coverage of how people are using Meta’s Ray-Ban smart glasses to film people without their knowledge or consent. “I consider it to be a tiny part of resistance against surveillance tech.”

more at: @feed@404media.co

You know what sucks?

That AR glasses, in theory, are such an interesting technology with lots of potential, and certainly a piece of tech I would love to have and work with and on. Not to secretly record people, but to, well… augment my field of view with whatever digital tools or displays I would like. It would be so useful.

It’s honestly kinda saddening to me that it most likely will get completely ruined by our current toxic relationship to technology. A step towards our ever increasing cyberdystopia, and not towards enchanting our limited lives

Obviously either way I don’t trust Meta, but an open-hardware device running a FOSS AR system? It would be nice…

I still hold out hope that this somehow could be resolved, and I would love to contribute to open software for these devices. Maybe one day soon-ish I will. My expertise should be well applicable, after all

It would be incredibly useful in construction. Having a digital overlay telling you exactly where to put up the framing for a separating wall, or an overlay showing the correct distance between screws, or where wires and pipes are inside a wall? There are so incredibly many awesome possible uses for AR in construction.

I always wanted to build an AR app for inside data centers. Imagine looking at a server and being able to open a terminal or desktop that you can immediately interact with on the floor. Or have it display resource information like hardware utilization, temps, network throughput and configuration, etc.

It would make a difficult job just a bit more manageable.

I really like the special tagged tape that could bring up AR tags and details about it. Organization and directions are so much more useful.

It would be so cool to have something like this integrated into your monitoring platform. Imagine being able to “tap” on a switch in a rack and view its MAC table or port assignments.

Pretty sure that already exists.

But it is mainly used for solving hardware problems where a technician can film whatever they are working on with their phone, and a remote technician can “draw” in AR on the image in real time to point towards the things that need manual interventions.

I’m in the AEC industry. Almost any implementation of on site augmentation sucks ass most especially because the tech nerds making them have a really hard time truly understanding the needs OF tradespeople and installers.

Almost all of them are top down implementations meant to assess tooling and field quality rather than actually acting as an overlay aid in construction (which, like, 90% of tradespeople worth their salt don’t actually need FYI).

Also, I’ve found, most of these tech nerds making this shit don’t know how to actually put a building together and are constantly flummoxed by the methodology.

I’ve worked in construction, and now work as a CAD specialist, so I know your pain, but the problem with “how to actually put a building together” is a very wide issue, also present with engineers and architects.

It’s already used in construction as a documentation device. Photos are big as a documentation tool and some inspectors already use wearable cameras as a tool.

ok, but they don’t try and hide them do they?

I know one engineer who bought the Meta glasses due to the form factor. Others use a GoPro and usually mount it on their hard hat, which makes it easy to see since black hard hats are rare.

Or playing Pokémon GO

Sure, that creepy 48 year old on the subway is just looking for a Charmander.

They are used for those kinds of applications already. You put one of those on, and some technician remotely guides you in doing some maintenance while looking through your eyes. They can mark things in your FOV, show you diagrams, whatever. Pretty neat actually.

Unneeded. We already have a tool for that: it’s called blueprints, and they haven’t failed in over 3000 years.

Blueprints don’t fail, but people really, really often do. People measure wrong, or build on the wrong side of the line they’ve drawn. It’s not a question of “Is it essential?”, it’s a question of “Will it make it easier, faster and less error-prone?”.

Drop the cameras and microphones and replace them with a couple accelerometers and gyros. Paired with your phone’s GPS tracking, the glasses can tell where you’re looking without actually seeing anything. You can get handy features like a floating ‘turn here’ sign over your exit while driving with GPS navigation without recording anyone or anything at any time. Better battery life, too.
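To make the camera-free idea a bit more concrete, here is a minimal sketch of the geometry involved: given the phone's GPS position, the position of the exit, and a head yaw estimate from the glasses, work out how far left or right of center the “turn here” sign belongs. Everything here is an assumption for illustration: the function names, the 30° field of view, and the idea that the glasses can report an absolute heading (gyros and accelerometers alone tend to drift in yaw, so in practice a magnetometer or similar reference would likely be needed).

```python
import math


def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial great-circle bearing from point 1 to point 2,
    in degrees clockwise from true north (0 <= result < 360)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    y = math.sin(dlon) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlon))
    return math.degrees(math.atan2(y, x)) % 360.0


def sign_offset_deg(head_yaw_deg, target_bearing_deg):
    """Signed angle from where the wearer is facing to the target.
    Negative means the target is to the left, positive to the right.
    The result is wrapped into [-180, 180)."""
    return (target_bearing_deg - head_yaw_deg + 180.0) % 360.0 - 180.0


def sign_visible(offset_deg, horizontal_fov_deg=30.0):
    """True if the target falls inside the (hypothetical) display FOV."""
    return abs(offset_deg) <= horizontal_fov_deg / 2.0


if __name__ == "__main__":
    # Hypothetical values: wearer position, exit position, head yaw.
    target = bearing_deg(52.5200, 13.4050, 52.5210, 13.4050)
    offset = sign_offset_deg(head_yaw_deg=350.0, target_bearing_deg=target)
    print(f"bearing to exit: {target:.1f} deg, offset: {offset:.1f} deg, "
          f"visible: {sign_visible(offset)}")
```

No image data is involved at any point: the overlay position comes purely from two coordinates and one angle. The catch is that the result is only as good as the position and heading estimates feeding it.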

Tbh I don’t even mind cameras that much if they were entirely controlled by the individuals themselves. I have a much bigger issue with it when you’re streaming my facial recognition data to Evil Megacorp 2™ servers that also feed directly to the “Not Spying… Again” agency, though.

Is that what you’re referring to? https://futurism.com/artificial-intelligence/meta-facial-recognition-glasses These people have no ethics and no moral code. They know we’ll hate it, so they want to sneak it!

What about a camera that is covered by a cap that can only be lifted if they press the mechanical button on the side of the glasses?

You could still film things like posters to get more information about the event, while not filming everyone all the time.

I don’t think that would work particularly well with AR: People get sick if movement isn’t synced up properly, not having any sort of cameras or sensors at all would exacerbate that problem.

If you are talking about a simple HUD, then that might be a lot more viable, but for AR and the tech we currently have, some sort of camera or sensor array is kind of a requirement practically speaking.

See, I don’t really want full AR. I want a HUD, a very small number of rudimentary AR features, like floating windows for text documents or videos, physical buttons on the arms of the glasses, small drivers by the ears for audio, and battery life that will last most of the day. I already have to wear glasses and if I’m paying more for extra features I want ones that will last the whole time I might want them, not just the six or so hours a day that the current offerings have.

If that’s all you want, making something that just clips onto your existing glasses might be pretty viable.

Except that one cool thing with AR is being able to have it tell what you’re looking at, not just positioning things in space. A lot of cool shit that could be done with AR, like real-time text translation, object identification, etc., needs some kind of camera, even if it just sees IR light. Lotta cool shit needs a microphone, too.

GNSS isn’t really accurate enough for this, especially in urban environments where there is poor line of sight to most of the satellites.

Using an AR display on those glasses with frames that thick is such a horrible idea. Google was on the right track with the HUD displayed on a frame-less prism that doesn’t block half your vision.

Last thing I’d want is to be in the middle of something with my hands full and the display bugs out, blocking the one eye, making me screw something up.

Maybe don’t cause your own problems.

I mean, that was sorta the point of the comment…

I don’t like them and therefore I won’t be using them, ever. I’d get a less obstructing headset instead. And I wouldn’t get a headset just to play around with it, I’d actually want to use it and try to get it to help me do things.

It’s only a matter of time before someone is arrested on suspicion of voyeurism and there is evidence of him staring at some girl’s boobs.

Every guy does this, but “Looking at cleavage is like looking at the sun. You don’t stare at it. It’s too risky. Ya get a sense of it and then you look away!”

-Jerry Seinfeld.

I agree, it would be nice.

The truth is that we already are living in the surveillance state and people are just going to have to “get over” being recorded in public by anyone that walks by.

I don’t like it either. But that’s the reality we’re entering into, where privacy isn’t a right but a privilege and that privilege does not exist save for some very select (if any at this point) places like your home … Maybe.

No, people do not have to get over that. People need to stand up for their rights. Being in a public space isn’t justification to have your movements recorded and logged 24/7. Stop being the fucking knee you coward.

I’m just being realistic about the future. You already are carrying around a machine that’s listening and watching. You’re walking into and out of stores where you’re on camera. Hell you’re driving past however many cameras in your car or walking past them on the street, every business, every office, every space has cameras now.

Thus, I think eventually more and more augmented reality devices will be seen because people will come to appreciate their uses outside of just being recording devices once that concern is overcome. In other words, wearing AR glasses won’t get you default labeled as some perverted weirdo.

You don’t need to bend the knee but we’re past the point where there should be any expectation of privacy in public spaces. I’m not saying I like it, I’m saying I expect our society to continue to move towards a surveillance model where privacy simply cannot be expected in any public space.

Do I think it’s dystopian and bad, yes, yes I do. I also think we need strong privacy protections for our private domiciles. That doesn’t mean my opinion is aligned with what actually is going to happen in our world.

I don’t want it but it’s what is going to happen and has been happening.

While I agree that AR glasses will become widespread, there’s still time to advocate for and implement privacy-focused regulations. Especially early on, while people are upset about the technology.

While not perfect solutions, requirements such as mandatory recording LEDs are steps in the right direction.

I was picking up my new prescription glasses this week at a large mall. They had Ray Ban and Oakley Meta glasses and the clerk said they have not sold a single pair.

That’s all I read. C.O.W.A.R.D.

You’re a silly person, aren’t ya.

Yeah fuck me for acknowledging AR glasses or other AR tech could be very useful but it’s being limited by our privacy concerns that are basically theater at this point.

Truly I am so cowardly Mr big internet man.

What does this even mean? You gonna punch every other person you see?

Fuck off with your disingenuous comment

You don’t need to actively punch everyone you see. Just punch the Nazis. For the privacy part, what one could reasonably do once the tech becomes affordable is to, e.g., wear passive EMP devices or something similar that disable cameras near you (at some point, the tech has to fit in a space too small for EMP shielding).

If you want to bend and spread, you do you. You don’t have to tell us to “get over” it and share your fetish. That’s a not-nice thing to do.

I’m just being realistic about the future. You already are carrying around a machine that’s listening and watching. You’re walking into and out of stores where you’re on camera. Hell you’re driving past however many cameras in your car or walking past them on the street, every business, every office, every space has cameras now.

Thus, I think eventually more and more augmented reality devices will be seen because people will come to appreciate their uses outside of just being recording devices once that concern is overcome. In other words, wearing AR glasses won’t get you default labeled as some perverted weirdo.

You don’t need to bend over and spread but we’re past the point where there should be any expectation of privacy in public spaces. I’m not saying I like it, I’m saying I expect our society to continue to move towards a surveillance model where privacy simply cannot be expected in any public space.

Do I think it’s dystopian and bad, yes, yes I do. I also think we need strong privacy protections for our private domiciles. That doesn’t mean my opinion is aligned with what actually is going to happen in our world.

I don’t want it but it’s what is going to happen and has been happening.