I’m definitely on the side that overusing AI and using it commercially seems to be bad. On the other hand, it seems like a tech with huge potential upsides. I’m not sure we can achieve a post-scarcity society with all labor being done by humans. This is where I see AI becoming a massive tool, assuming we can pair it with mechanical means of work, not strictly digital ones. I know it’s a touchy subject, but I want to hear your opinion. As always, if you’re just going to tell me to read more, recommend literature.

Machine learning by itself is already paying off. LLMs the way normal people use them are fine, but not the way bosses want to use them - you can’t rationalize away employees with this, you can only give them a tool that empowers them. A lot of heads will roll in management at the corporations that haven’t realized this yet.

Code generation might get better over the next few years, but looking at the current trajectory I would say that with current tech there will never be a point where you can simply replace a dev with an agentic AI without the generated code being full of inefficiencies, bugs and security issues.

It also might open up the first therapeutic LLM (without the current fuckups) if development focuses on it - THIS would address something that is labor-intensive and priced so that exactly the people who need it can’t afford it on a regular basis, and it is definitely attainable without hitting much of a technological limit.

ImageGen can also pay off in some areas - creating tons of “stony wall” textures isn’t fun, but implementing a tiny model that creates as many “stony walls” as you want in your game with differing amounts of stones or dirt as a variable might be worth the effort.

Prototyping is also a big thing in both of those areas (CodeGen/ImageGen), but I think that’s no secret anymore.

Videogen in its current form is a waste of energy. The models need something that anchors them, to make sure that the Coke truck in one camera angle stays the same Coke truck in the next angle; currently it’s just ImageGen times 25 per second, which causes those issues - and the massive energy consumption. This is the only area where the generation process itself chews through more electrons than the global energy bill allows for. Someone smart will probably crack that nut too - I believe that the solutions to those two issues (energy consumption and missing object permanence) might be linked.

Edit: The missing permanence might also be a reason for many of the issues of LLMs: they lack some kind of “self” with a sense of the passage of time to return to. I’m pretty sure I’m not the first one who thought of that, and there are probably a lot of people with even more PhDs at work here.

There are also serious gains to be made in science on the back of AI models, just not the flavors most people are familiar with.

https://pmc.ncbi.nlm.nih.gov/articles/PMC8633405/

Image generation uses the concept of a LoRA, a bolt-on model that augments a base model. It can add support for additional tokens (those map more or less to words and concepts) or bias the base model on existing tokens. For now, that’s probably as close as you’re going to get to anything resembling long-term memory on an LLM.
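To make the “bolt-on” idea a bit more concrete, here is a minimal sketch of the low-rank update that gives LoRA its name, assuming the usual formulation where the adapted weight is the frozen base weight plus a scaled product of two small matrices. The helper names (`matmul`, `merge_lora`) and the toy numbers are made up for illustration, not taken from any particular library.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(base, up, down, alpha=1.0):
    """Return base + (alpha / rank) * (up @ down) without modifying base.

    base: d_out x d_in frozen weight matrix
    up:   d_out x rank adapter matrix (trained)
    down: rank x d_in adapter matrix (trained)
    """
    rank = len(down)
    delta = matmul(up, down)          # full-size update built from two small matrices
    scale = alpha / rank
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]


# Toy example: a 4x4 identity "base model" layer and a rank-1 adapter.
base = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
up = [[1.0], [0.0], [0.0], [0.0]]     # 4 x 1
down = [[0.0, 2.0, 0.0, 0.0]]         # 1 x 4

merged = merge_lora(base, up, down)

# The adapter stores far fewer numbers than the layer it modifies.
full_params = len(base) * len(base[0])
adapter_params = len(up) * len(up[0]) + len(down) * len(down[0])
```

The base weights stay untouched, so the adapter can be swapped in and out, which is why it feels more like an attachment than like retraining.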

Of course, I was just fixated on the GenAI aspect of the question. Climate modeling, medicine (especially diagnostics and neuroscience), physics, chemistry and even the social sciences can benefit a lot. In manufacturing it can be used to control industrial processes (e.g. balancing a chemical process; I know it’s used in QC in electronics production). This IS the next big thing, but not in the way the corpos try to sell it to us.

Yeah, for the moment, this is it. We will need more research into how to actually read the data inside a neural network, to be able to a) reroute pathways that are problematic and b) let a process hook into control points to modify it on the fly.

Overall no, but mainly because of wasteful business usage without any real return. If an individual uses one instead of, say, streaming video to entertain themselves, then I’m not sure they aren’t using less electricity than they otherwise would. If they are using it for searching and can get a response equivalent to several searches in one query, then it just might break even. If they are manipulating images or creating content, they might otherwise use other software to do it. Well, I don’t know, as I have not done a comparison, and I suspect it’s too variable to get a good call, but I would not be surprised if it ends up being pretty even. The other real problem is people doing things they otherwise would not. Before, digital art was made by people who spent a lot of time learning and getting good at it, but now it’s whoever, and many are making things of far less value than they think. Also, they might tell it to do the same thing again and again, and it pretty much remakes the whole thing each time, whereas a human would just edit it a bit. The result is a bit like graffiti: a lot of garbage for the resources used, but here and there someone does something good.

I don’t believe that the current definition of AI (LLM/Generative) will ever live up to half the hype. If I knew how, I’d try to make money from the hype imploding.

I even more confidently believe that it will not lead to a post-scarcity society. But most of that belief is because I don’t think humans are capable of developing such a society.

Some things are inherently scarce. You only have 24 hours in a day, and there are only so many places you can build a house.

Why don’t you think humans could develop post scarcity?

Greed. Case study: insulin.

Case study: almost every other wealthy democracy in the world besides the US and how they deal with insulin. Living in a wealthy democracy and not being able to afford insulin is a uniquely American problem.

And the most absurd thing is, only a part of the people think it is a problem to begin with.

i do think humans can develop post scarcity.

i just don’t think pedophile-made LLMs are it.

Supposedly there is some cool stuff going on in the medical field where the AI can identify abnormalities in scans better than doctors. But it’s obviously never going to be able to think.

I work in biomed R&D, and specifically spent several years in Radiology.

Industry consensus is that CAD occasionally picks up anomalies that a radiologist would have missed, but the false positives it picks up are noisy enough to largely offset that benefit. It’s fine if used as a second pass to catch areas a human missed, but doesn’t actually perform “better than a doctor” in a vacuum, precisely because it’s not thinking for itself and e.g. cross referencing the imaging against clinical history.
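A rough back-of-the-envelope sketch of why false positives can eat the benefit of a second pass. Every number below is hypothetical and picked only to show the arithmetic, not taken from any study, and the function name `second_pass` is made up for this example. It also assumes the CAD catches missed findings independently of why the reader missed them.

```python
def second_pass(n_scans, prevalence, reader_sensitivity,
                cad_sensitivity, cad_false_positive_rate):
    """Estimate extra true findings vs. false alarms when CAD re-checks every scan."""
    abnormal = n_scans * prevalence
    normal = n_scans - abnormal
    missed_by_reader = abnormal * (1 - reader_sensitivity)
    extra_catches = missed_by_reader * cad_sensitivity   # findings only the CAD flags
    false_alarms = normal * cad_false_positive_rate      # healthy scans flagged anyway
    return extra_catches, false_alarms


# Hypothetical inputs: 10,000 scans, 1% truly abnormal, reader finds 90% of those,
# CAD finds 80% of what the reader missed and wrongly flags 5% of normal scans.
extra_catches, false_alarms = second_pass(
    n_scans=10_000,
    prevalence=0.01,
    reader_sensitivity=0.9,
    cad_sensitivity=0.8,
    cad_false_positive_rate=0.05,
)
alarms_per_catch = false_alarms / extra_catches
```

With these made-up inputs the CAD adds roughly 8 real findings at the price of roughly 495 false alarms that someone still has to review, which is the kind of trade-off that makes “second pass” a better fit than “replacement”.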

I think that’s just pattern matching like facial recognition. It covers more imaging in less time and can help identify areas of concern. But that doesn’t need trillions of dollars.

Facial recognition is also AI, though.

That’s why I said originally generative ai and LLM

A deep learning model can tell biological sex from retina pictures, but not even the best eye doctors can. You feed it a pile of images labeled “these are from men” and “these are from women,” and it figures out the differences and applies that knowledge to pictures it’s never seen before. As far as I know, we still don’t know what exactly it’s picking up on - or if it’s even something a human could distinguish - but for an AI it’s not a problem.

I think the term “AI” has just been a bit stained by all the people conflating it with GenAI. Yes, GenAI is AI, but the term AI covers all kinds of systems, and GenAI is just one subcategory.

No

No; in fact, I don’t think the people behind AI care about the future at all. They’re just trying to grab what they can in a hurry and dip when the bubble pops. They’ll fuck off to the Caribbean or something like that and live off the riches, and let us clean up their mess.

Bummer

Useful AI, as in good pattern matching for science and engineering, is already a benefit.

LLM and genAI slop will never be a net benefit to anyone other than cheapass executives and shareholders because their models are fundamentally dogshit. Both just regurgitate shit they were trained on, and with their massive cost overhead, currently covered by investors, they will never end up being profitable. They can’t learn anything new without the massive training costs, and that will be a perpetual cost based on their design.

Plus, if they were as successful at taking all the jobs as they are marketed to be, the economy would collapse, since nobody would have income anymore. This isn’t comparable to automating manufacturing or farming or other industries that output physical goods. This is automating paperwork and advertising, which doesn’t matter if nobody is working.

The kind of AI you’re talking about, one that could replace human labour to the point of making something like a post-scarcity society happen, isn’t even on the horizon, and I don’t believe the LLM hype has any chance of leading to it at any point. But even if it did, imo there’s no scenario where capitalist societies transition to a post-scarcity utopia where human labor is replaced by machine labor through technological innovation.

The 19th and 20th centuries are a history of human labor being displaced rather than replaced by innovation. People are made obsolete in their own jobs, but the fundamental threat of “work or starve” remains structural. So people who are older, already specialized and who can’t easily change occupations become atrociously poor or straight up die, and younger people find and invest their formative years in new ways to work, producing stuff that’s not yet automated.

So before you can even think about a post-scarcity utopia you need something like UBI and a socialist organization where people who get innovated out of their jobs can still live, but if you have that kind of society I think it would naturally orient itself toward degrowth and production of what is needed through human labor, rather than the kind of overproduction frenzy necessary for everyone’s labor to be continuously replaced with metal and silicon.

A lot of what AI is good at is very useful for automating massive state surveillance programs. AI could monitor live audio and video communication for problem phrases, for example.

Making authoritarian rule easier and cheaper is a very valuable capability to elites, though detrimental to the world.

I think the main effect of AI will be continuing this deadly trend of power consolidation that we’ve seen since maybe the Mesolithic. The power of information will be consolidated in the hands of fewer and fewer AI oligarchs, allowing ever more intrusive states to control more people more precisely.

No. The story of hardware development is a fucking legend, it’s just tarnished by how completely fucking inept we are at using the gains. And it’s apparently getting worse all the time - my mind boggled when Electron of all things turned standard, because I would’ve thought putting Chrome into everything (including low power scenarios) was an obviously fucking blitheringly idiotic idea, but here we are. LLMs have the same problem except probably orders of magnitude worse. Aside from possibly getting worse at developing better performance, we usually seem to beeline for a way to waste as much of it as we can. Moore’s law, of course, is long dead.

Honestly, no. Maybe I’m just the old man yelling at the cloud here, but I only really accept the use of local AI as somewhat ethical.

These AI datacenters have caused enough harm already.

A true AGI would be the ultimate labor-saving device, but the two main issues are that we have no clue how far away we are from reaching it - and we also have no guarantees that when we do, it’s going to end well for us.

It also might not be about more compute. The human brain is generally intelligent and it doesn’t need a massive datacenter to run it.

Your analogy is bad.

The human brain doesn’t need a massive data center, but neither does the compute to run a single agent.

We build entire cities to apply multiple human brains to problems.

Yeah that makes sense. Idk just seems like we need to figure something out.

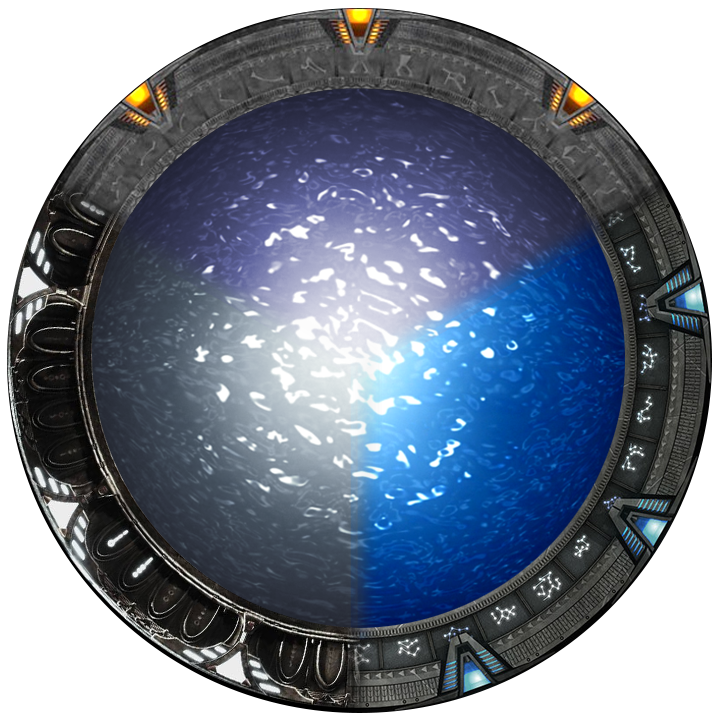

Yes, the end goal is to create The Director so we send a traveler back to tell us “THE OPEN AI PROJECT WILL FAIL, HUMANITY WILL BE DOOMED, DO NOT GIVE BIRTH TO SAM ALTMAN”

Idk how we’ll get a T.E.L.L on his mother tho… maybe get a geo-location on the landlines? Maybe the location of the actual bed in the hospital? Just get a traveler in there and be like “Doc, I changed my mind, I know I’ve been carrying this for 9 months and it’s almost full term, but can I get an abortion right now?” 🤭

(Btw: you should really watch Travelers, it’s worth it I promise)

That was a great show

Thanks for the recommendation

Future capabilities will never offset resource drain. Only resource usage optimization can.

EVER is a long time.

The current implementation? Not unless they stop training along the same lines they currently are. I think there’s some value, and you can access it pretty easily with the freely available open source models that are out there and some semi-decent hardware, but hundreds of billions to trillions in revenue for multiple corporations? Nah.

They’ll maaaaybe mitigate it by shifting people away from home computing and into connected systems, but I suspect that the moment the bubble pops, or hardware production levels off at their current demand, people will end up realizing they can run 90% of what’s being offered on a gaming laptop from 2020.