…Previously, a creative design engineer would develop a 3D model of a new car concept. This model would be sent to aerodynamics specialists, who would run physics simulations to determine the coefficient of drag of the proposed car—an important metric for energy efficiency of the vehicle. This simulation phase would take about two weeks, and the aerodynamics engineer would then report the drag coefficient back to the creative designer, possibly with suggested modifications.

Now, GM has trained an in-house large physics model on those simulation results. The AI takes in a 3D car model and outputs a coefficient of drag in a matter of minutes. “We have experts in the aerodynamics and the creative studio now who can sit together and iterate instantly to make decisions [about] our future products,” says Rene Strauss, director of virtual integration engineering at GM…

“What we’re seeing is that actually, these tools are empowering the engineers to be much more efficient,” Tschammer says. “Before, these engineers would spend a lot of time on low added value tasks, whereas now these manual tasks from the past can be automated using these AI models, and the engineers can focus on taking the design decisions at the end of the day. We still need engineers more than ever.”

Then I doubt they are running the mentioned most accurate, two-week-long physics solvers at this stage either. You only do that when you need accuracy. A quick simulation doesn’t take long.

I’m failing to see why the creative writing machine is better than a simulation set to ‘rough’.

The problem is that you saw AI and thought LLM.

Machine Learning is a big field, AI/Neural Networks are a subset of that field and LLMs are only a single application of a specific type of LLM (Transformer model) to a specific task (next token prediction).

The only reason that LLMs and Image generation models are the most visible is that training neural network requires a large amount of data and the largest repository of public data, the Internet, is primarily text and images. So, text and image models were the first large models to be trained.

The most exciting and potentially impactful uses of AI are not LLMs. Things like protein folding and robotics will have more of an impact on the world than chatbots.

In this case, generating fast approximations for physical modeling can save a ton of compute time for engineering work.

Other people in this thread say physics simulations are inherently chaotic. If an AI model is trained on inherently chaotic data, how will the results not be chaotic or not worse?

Because physically speaking, chaotic and unpredictable are two different things - and why it works so well on this case: it’s becoming a stochastic problem, not a deterministic one.

It’s an awesome area for machine learning: you didn’t need to understand the result and how it got created, it just needs to be “close enough”.

The universe is chaotic. But chaos doesn’t mean something isn’t reproducible or doesn’t follow a set of rules.

That is an insightful question.

The answer is that we actually understand chaos in a way. It isn’t unpredictable in general, it’s just hard to say how any given situation will evolve but we can understand a lot about how all systems will evolve.

I’m not good at explaining, but some content creators cover this topic pretty well. If you’re interested, here’s a video about it from Veritasium: https://www.youtube.com/watch?v=fDek6cYijxI

Watson beat Ken Jennings over a decade ago. Protein folding was already done too, the people who did it even won a Nobel prize for it a couple years ago.

LLMs being the most visible part of AI after over 75 years of AI, isn’t because they’re the biggest or latest or greatest or whatever, it’s marketing. Plain and simple marketing.

Protein folding is far from “done” lol. Models have gotten a lot better but there is still more to do.

I didn’t say it was finished. I said people had won a Nobel prize for having done it. It takes decades to win a Nobel prize. My point was that it had been done years and years ago, not recently.

Anfinsen won the Nobel in 1972 for showing that the amino acid sequence is what is responsible for the 3D structure of proteins.

Since then we’ve been able to take images of protein’s structures using xray crystallography but that is a painstaking process. The ability to accurately predict a protein’s structure from an amino acid sequence has been an unsolved problem until very recently.

It wasn’t until 2024 that Hassabis, Jumper and Baker won the Nobel for their work in predicting protein structure (using an AI called AlphaFold) and computationally designing new proteins.

The ability to create arbitrary proteins is new and will revolutionize some fields of medicine (like cancer treatment) and, to me, is a much more impressive use of AI.

LLMs are interesting but they are incredibly over-hyped as far as ‘changing the world’ goes, imo.

They won the prize for an AI they made in the early 2000s.

Probably more that it’s the only AI normal people will interact with regularly. Your average person isn’t going to run a protein folding application, but they will probably talk to chatgpt or use Google AI summaries.

I work for a company that’s been using machine learning software for slightly over a decade.

because it’s not a “creative writing machine”, it’s machine learning that’s been trained to run physics simulations. it has nothing to do with LLMs. we’ve been using systems like this for decades. including ones like Folding@home which have been instrumental in the development of many drug therapies for different illnesses.

your internal biases have clouded your critical thinking skills and your ability to competently and thoughtfully examine information you’re provided has been compromised. in plain english, like the AI techbros you despise, you’ve given up your ability to think.

It’s hardly their fault for thinking it was related to the AI LLM or multimodal models when in all actuality the article states that these “large physics models” may be any sort of configuration, including LLM transformers:

It seemed you really needed to take your frustrations out on someone else’s comment.

The unthinking AI haters are all over social media. They keep saying that AI can’t really think. But ironically, the “arguments” they usually use is the worst kind unthinking regurgitated groupthink slob.

Transformers can’t really think(at least not more then an excel sheet) oversymplified its a stochastic model, it gives propable result.

But that does not make it useless there are many tasks where propable with the right margin of error is good enough.

But there are also tasks where it isnt, even humans also have a chance for error they can be at fault for it / take responsibility.

This doesn’t sound like they are using LLMs for processing their 3D models. The way it’s described in the article sounds a lot more like some machine learning model trained on physics simulations for aerodynamics.

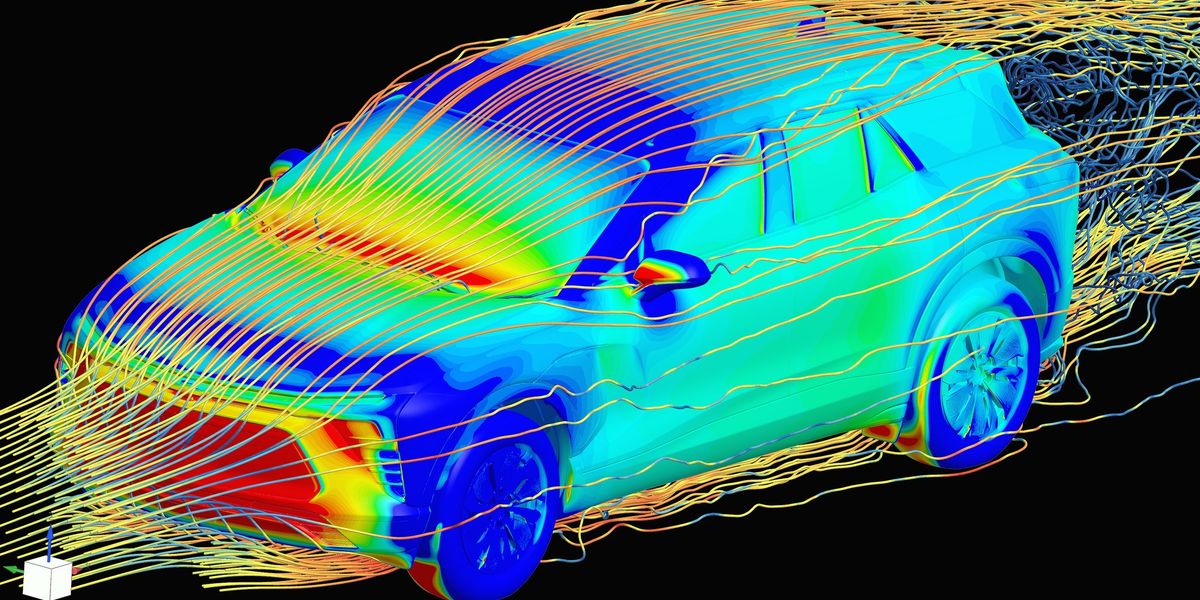

Yes! The way those physics models are created is so cool. The article somewhat explains it, but it’s mostly a fluff-piece for things unrelated to genAI. More in-depth:

The physically accurate simulation is great but slow. So we can create a neural network (there’s a huge variety in shapes), and give it an example of physics, and tell it to make a guess as to what it’ll look like in, say, 1ms. We make it improve at this billions of times, and eventually it becomes “good enough” in most cases. By doing those 1ms steps in a loop, we get a full simulation. Because we chose the shape of it, we can pick a shape that’s quite fast to compute, and now we have a less-accurate but faster simulation.

The really cool thing is that sometimes, these models are better than the more expensive physics simulation, probably because real physics is logical and logical things are easier to learn.

We’ve done things like this for ages. One way we can improve them is by giving them multiple time steps. Unfortunately they kinda suck at seeing connections over time, so this is expensive. Luckily, transformers were invented! This is a neural network shape that is really good at seeing connections over one dimension, like time, while still being pretty cheap and really easy to do run in parallel (which is how you can go fast nowadays).

With a bunch of extra wiring, transformers also become GPT, i.e. text-based AIs. That’s why they suddenly got way better; they went from being able to see connections with words maybe 3-4 steps back, to recently a literal million. This is basically the only relationship with “AI” this has.

It’s a super exciting time for so many fields of science. Transformers are really the key discovery that’s made modern AI what it is today and we’re only barely scratching the surface of possible applications.

The future is going to be weird in ways we can’t even imagine.

I once worked tech support for people who ran physics simulations. They said that sometimes they had to rerun the simulations if they didn’t come back accurately. I asked how they could tell if they were accurate.

They said it was based on whether it felt right. I still hate that response, but I guess I can’t come up with a better idea, other than doing whatever they’re testing in real life.

Those kinds of simulations are inherently chaotic, tiny changes to the initial conditions can have wildly different outcomes sometimes to the point of being nonsensical. Also, since they’re simulating a limited volume the boundary conditions can cause weird artifacts in some cases.

If you run a simulation of air over an aircraft wing and the end result is a mess of turbulence instead of smooth flow then you can assume that simulation was acting weird and not that your wing design is suddenly breaking the rule of physics. When the simulation breaks it usually does so in ways that are obvious due to previous testing with physical models.

That’s … Basically what they said.

No, they said “it felt right” which is incomprehensible to anyone that doesn’t have any experience with how a CFD results generally looks like.

I don’t have any experience with “CFD results” but the two sound similar to me. The ellipsis might have made it seem sarcastic, but that wasn’t my intent.

CFD = Computational Fluid Dynamics.

It is kind of what they said, you’re right. I was more pointing how how it could be that they could ‘sense the vibes’ of a CFD result to determine if it is accurate or if the model decided to do something weird. Since it’s a chaotic process and also an artificial one, the starting conditions can yield results that are impossible/not based on reality.

If you look at enough of them you start to notice the kinds of things that go wrong. They would also have a pretty good idea about how their design should perform and if the simulation shows different they’d first want to troubleshoot the simulation before attempting to re-design whatever system they’re creating.

Maybe you’re my old co-worker.

Regardless, I appreciate the detail! Thank you for elaborating.

I have friends everywhere.

I came here to say the same thing. There’s no way a coarse CFD simulation of air over a car takes two weeks to run on even budget HPCs.

Long fine ones take about 8 hours to run for the ones we do. More complex ones run up to 36 hours. Iterative coarse simulations take a couple minutes.

If they’re taking two weeks to run their simulations, I question their process.

My interpretation is that the two weeks is a backlog. The drag coefficient guys probably have a bunch of models coming in, and they have a bit of an inbox and yours gets in the queue.

Most businesses nowadays have a bit of a backlog. It’s more efficient that way, because you can keep the workload at 100%. So my interpretation is that a two week backlog would mean there’s a few other simulations ahead of you in line.

I don’t know if this is the full explanation, but the article does touch on how the LPM can be tweaked to match physical tests:

I’ll swag that the simulation process is complex, fraught with various pitfalls and idiosyncracies that require specialized training / experience to get it setup and running properly, even in ‘rough’ mode. I’ll further swag that the reason it used to take 2 weeks was because there were about 50 engineering hours required to get it ready to do the number crunching - and those engineering hours can now be handled by the “creative writing machine” which has been trained in the various things it needs to know to match the expected patterns.